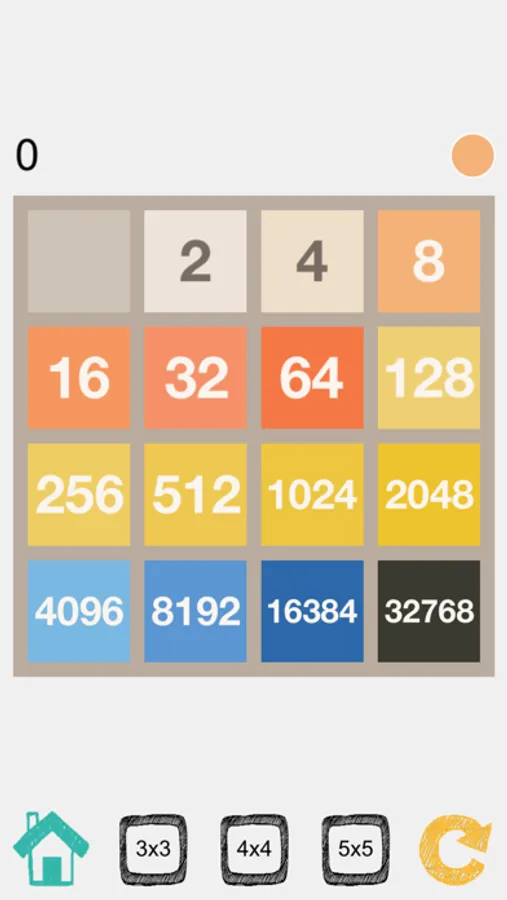

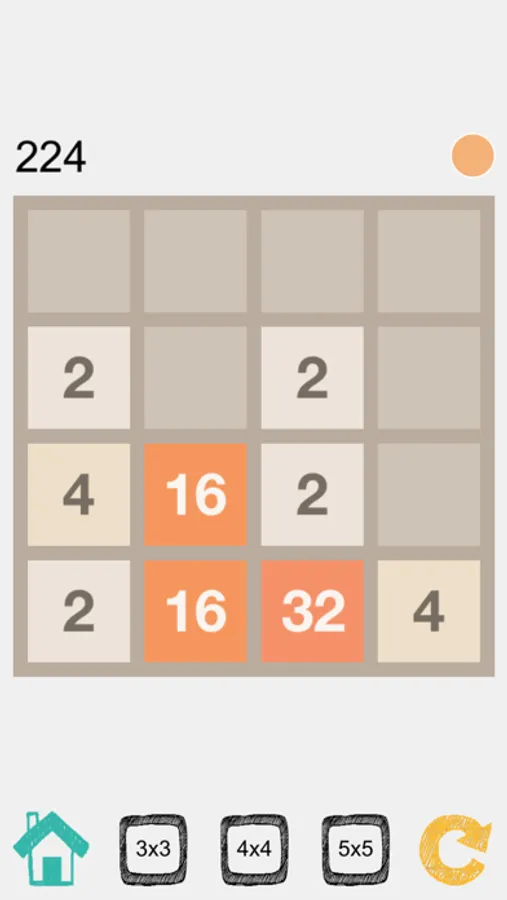

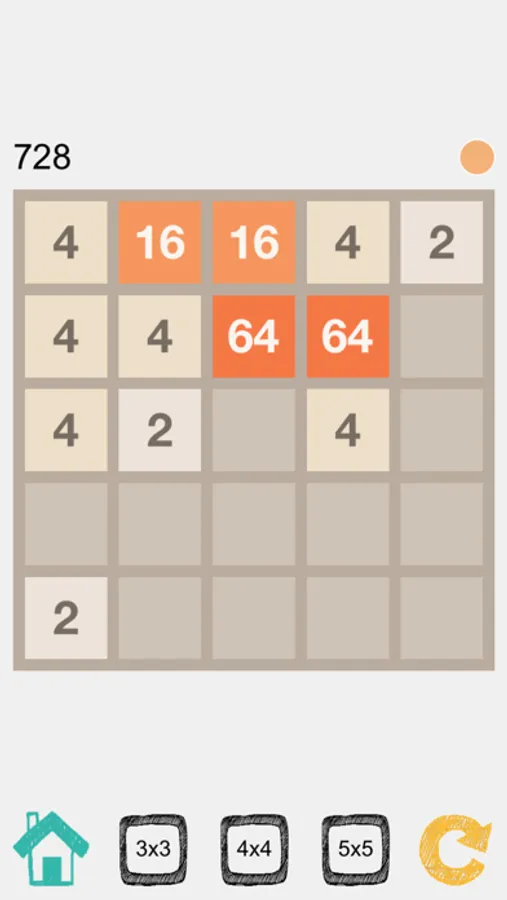

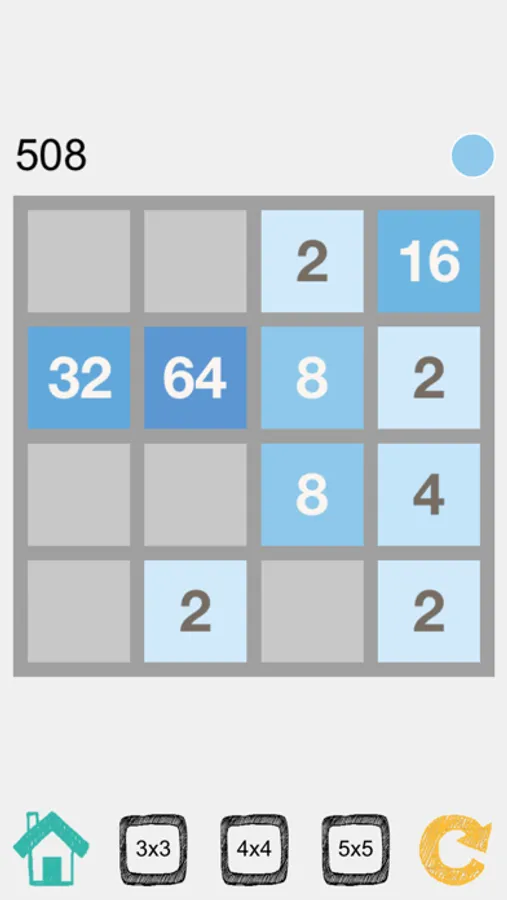

In this puzzle game, players slide tiles to combine numbers and reach target scores. Includes AI-powered move suggestions and multiple game modes.

AppRecs review analysis

AppRecs rating 4.1. Trustworthiness 74 out of 100. Review manipulation risk 28 out of 100. Based on a review sample analyzed.

★★★★☆

4.1

AppRecs Rating

Ratings breakdown

5 star

82%

4 star

8%

3 star

6%

2 star

1%

1 star

3%

What to know

✓

Low review manipulation risk

28% review manipulation risk

✓

Credible reviews

74% trustworthiness score from analyzed reviews

⚠

Ad complaints

Many low ratings mention excessive ads

About 2048 (3x3, 4x4, 5x5) AI

Classic 2048 puzzle game redefined by AI.

Our 2048 is one of its own kind in the market. We leverage multiple algorithms to create an AI for the classic 2048 puzzle game.

* Redefined by AI *

We created an AI that takes advantage of multiple state-of-the-art algorithms, including Monte Carlo Tree Search (MCTS) [a], Expectimax [b], Iterative Deepening Depth-First Search (IDDFS) [c] and Reinforcement Learning [d].

(a) Monte Carlo Tree Search (MCTS) is a heuristic search algorithm introduced in 2006 for computer Go, and has been used in other games like chess, and of course this 2048 game. Monte Carlo Tree Search Algorithm chooses the best possible move from the current state of game's tree (similar to IDDFS).

(b) Expectimax search is a variation of the minimax algorithm, with addition of "chance" nodes in the search tree. This technique is commonly used in games with undeterministic behavior, such as Minesweeper (random mine location), Pacman (random ghost move) and this 2048 game (random tile spawn position and its number value).

(c)Iterative Deepening depth-first search (IDDFS) is a search strategy in which a depth-limited version of DFS is run repeatedly with increasing depth limits. IDDFS is optimal like breadth-first search (BFS), but uses much less memory. This 2048 AI implementation assigns various heuristic scores (or penalties) on multiple features (e.g. empty cell count) to compute the optimal next move.

(d) Reinforcement learning is the training of ML models to yield an action (or decision) in an environment in order to maximize cumulative reward. This 2048 RL implementation has no hard-coded intelligence (i.e. no heuristic score based on human understanding of the game). There is no knowledge about what makes a good move, and the AI agent "figures it out" on its own as we train the model.

References:

[a] https://www.aaai.org/Papers/AIIDE/2008/AIIDE08-036.pdf

[b] http://www.jveness.info/publications/thesis.pdf

[c] https://cse.sc.edu/~MGV/csce580sp15/gradPres/korf_IDAStar_1985.pdf

[d] http://rail.eecs.berkeley.edu/deeprlcourse/static/slides/lec-8.pdf

Our 2048 is one of its own kind in the market. We leverage multiple algorithms to create an AI for the classic 2048 puzzle game.

* Redefined by AI *

We created an AI that takes advantage of multiple state-of-the-art algorithms, including Monte Carlo Tree Search (MCTS) [a], Expectimax [b], Iterative Deepening Depth-First Search (IDDFS) [c] and Reinforcement Learning [d].

(a) Monte Carlo Tree Search (MCTS) is a heuristic search algorithm introduced in 2006 for computer Go, and has been used in other games like chess, and of course this 2048 game. Monte Carlo Tree Search Algorithm chooses the best possible move from the current state of game's tree (similar to IDDFS).

(b) Expectimax search is a variation of the minimax algorithm, with addition of "chance" nodes in the search tree. This technique is commonly used in games with undeterministic behavior, such as Minesweeper (random mine location), Pacman (random ghost move) and this 2048 game (random tile spawn position and its number value).

(c)Iterative Deepening depth-first search (IDDFS) is a search strategy in which a depth-limited version of DFS is run repeatedly with increasing depth limits. IDDFS is optimal like breadth-first search (BFS), but uses much less memory. This 2048 AI implementation assigns various heuristic scores (or penalties) on multiple features (e.g. empty cell count) to compute the optimal next move.

(d) Reinforcement learning is the training of ML models to yield an action (or decision) in an environment in order to maximize cumulative reward. This 2048 RL implementation has no hard-coded intelligence (i.e. no heuristic score based on human understanding of the game). There is no knowledge about what makes a good move, and the AI agent "figures it out" on its own as we train the model.

References:

[a] https://www.aaai.org/Papers/AIIDE/2008/AIIDE08-036.pdf

[b] http://www.jveness.info/publications/thesis.pdf

[c] https://cse.sc.edu/~MGV/csce580sp15/gradPres/korf_IDAStar_1985.pdf

[d] http://rail.eecs.berkeley.edu/deeprlcourse/static/slides/lec-8.pdf

2048 (3x3, 4x4, 5x5) AI Screenshots

Tap to Rate:

Reviews for 2048 (3x3, 4x4, 5x5) AI

Pretty Sally4535

Lot’s of games

In 2048, it’s not just that they’re more other games if you don’t feel like playing 2048.

Mija81

fun

it’s fun