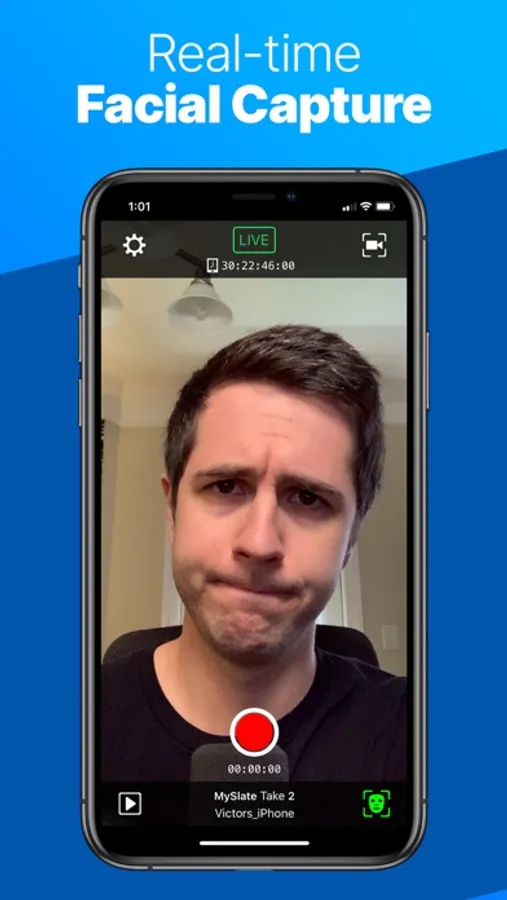

This app lets you capture facial performances on your iPhone or iPad to animate characters in Unreal Engine. It includes real-time streaming, depth data transmission, and support for multi-device synchronization.

AppRecs review analysis

AppRecs rating 3.5. Trustworthiness 76 out of 100. Review manipulation risk 22 out of 100. Based on an analyzed sample of reviews.

Ratings breakdown

- 5 star: 50%

- 4 star: 13%

- 3 star: 5%

- 2 star: 5%

- 1 star: 27%

What to know

- ✓ Low review manipulation risk: 22% review manipulation risk

- ✓ Credible reviews: 76% trustworthiness score from analyzed reviews

- ⚠ High negative review ratio: 32% of sampled ratings are 1–2 stars

About Live Link Face

Capture facial performances for MetaHuman Animator:

- Live Link Face supports both real-time and processed animation.

- MetaHuman Animator uses Live Link Face to capture performances, then applies its own processing to create high-fidelity facial animation for MetaHumans.

- The Live Link Face app captures raw video and depth data, which can be transmitted directly from your device into Unreal Engine for use with the MetaHuman plugin.

- Facial animation created with MetaHuman Animator can be applied to any MetaHuman character in just a few clicks.

- This workflow requires an iPhone (12 or above) and a desktop PC running Windows 10/11.

Real-time animation for MetaHumans:

- The Live Link Face app generates animation to drive a MetaHuman Character in real time.

- Animation data is streamed to Unreal Engine over a network using the MetaHuman Live Link Plugin.

- This workflow requires an iPhone (12 or above) and a desktop PC running Windows 10/11.

Real-time animation for non-MetaHuman characters:

- Stream out ARKit animation data live to an Unreal Engine instance via Live Link over a network.

- Visualize facial expressions in real time with live rendering in Unreal Engine.

- Drive a 3D preview mesh, optionally overlaid on the video reference on the phone.

- Record the raw ARKit animation data and front-facing video reference footage.

- Tune the capture data to the individual performer and improve facial animation quality with rest pose calibration.

Timecode support for multi-device synchronization:

- Choose the system clock or an NTP server as the timecode source, or use a Tentacle Sync device to connect with a master clock on stage.

- Video reference is frame accurate with embedded timecode for editorial.
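To illustrate what frame-accurate timecode derived from a clock means, here is a minimal Python sketch that maps a time of day to an `HH:MM:SS:FF` label. It assumes non-drop-frame timecode at an integer frame rate. The function names are hypothetical and this is not how Live Link Face itself computes timecode.

```python
def frames_to_timecode(frame_count: int, fps: int) -> str:
    """Format a frame count as non-drop-frame HH:MM:SS:FF timecode.

    Assumes an integer frame rate; hours wrap at 24 like a time-of-day clock.
    """
    frames = frame_count % fps
    total_seconds = frame_count // fps
    seconds = total_seconds % 60
    minutes = (total_seconds // 60) % 60
    hours = (total_seconds // 3600) % 24
    return f"{hours:02d}:{minutes:02d}:{seconds:02d}:{frames:02d}"


def clock_to_timecode(seconds_since_midnight: float, fps: int) -> str:
    """Convert a time of day (seconds since midnight) to timecode.

    The clock value could come from the system clock or an NTP-corrected
    clock; the fractional second is truncated to a whole frame.
    """
    return frames_to_timecode(int(seconds_since_midnight * fps), fps)
```

Devices that read the same clock and use the same frame rate produce the same label for the same instant, which is what lets takes from several devices be lined up in editorial.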

Control Live Link Face remotely with OSC or via the MetaHuman Plugin for Unreal Engine:

- Trigger recording remotely so actors can focus on their performances.

- Capture slate names and take numbers consistently.

- Extract data for processing and storage.
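As a rough illustration of remote control over OSC, the Python sketch below encodes OSC 1.0 messages with the standard library and sends them over UDP. The `/RecordStart` (slate name, take number) and `/RecordStop` addresses are assumptions based on Epic's published OSC command list and should be checked against the current documentation. The host, port, and helper names are hypothetical placeholders.

```python
import socket
import struct


def _osc_pad(data: bytes) -> bytes:
    """Null-terminate and pad to a multiple of 4 bytes, as OSC 1.0 requires."""
    padded_len = (len(data) // 4 + 1) * 4
    return data.ljust(padded_len, b"\x00")


def build_osc_message(address: str, *args) -> bytes:
    """Encode an OSC message with int32 and string arguments only."""
    type_tags = ","
    payload = b""
    for arg in args:
        if isinstance(arg, bool):
            raise TypeError("bool arguments are not supported in this sketch")
        if isinstance(arg, int):
            type_tags += "i"
            payload += struct.pack(">i", arg)  # big-endian int32
        elif isinstance(arg, str):
            type_tags += "s"
            payload += _osc_pad(arg.encode("utf-8"))
        else:
            raise TypeError(f"unsupported OSC argument type: {type(arg).__name__}")
    return (
        _osc_pad(address.encode("ascii"))
        + _osc_pad(type_tags.encode("ascii"))
        + payload
    )


def send_osc(host: str, port: int, address: str, *args) -> None:
    """Send one OSC message over UDP (fire and forget, no reply handling)."""
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        sock.sendto(build_osc_message(address, *args), (host, port))
```

With the device's IP address and the OSC listen port configured in the app's settings (both placeholders here), a take could be triggered with `send_osc("192.168.1.50", 8000, "/RecordStart", "shot_01", 3)` and ended with `send_osc("192.168.1.50", 8000, "/RecordStop")`.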

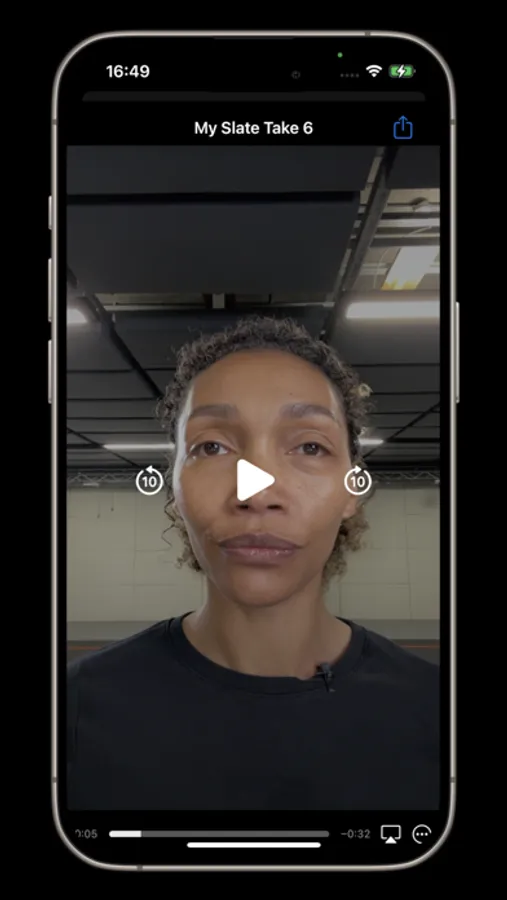

Browse and manage the library of captured takes:

- Delete takes within Live Link Face or share them via AirDrop.

- Transfer directly over network when using MetaHuman Animator.

- Review captured footage on-device.

Reviews for Live Link Face

Aaron Gottfried

This doesn’t work

I have tried everything, keep getting missing data when trying to use depth feature. I have iPhone 12 Pro Max which should work.

Low light face tracking please

Horrible low light performance in ARKit mode

ARKit supports using the infrared camera and dot projector in the true depth camera. Being able to use these would be very useful for face and eye tracking in environments with poor lighting. However, this app does not support using those tools in ARKit mode. (Which I need for blendshape based face tracking)